Summary

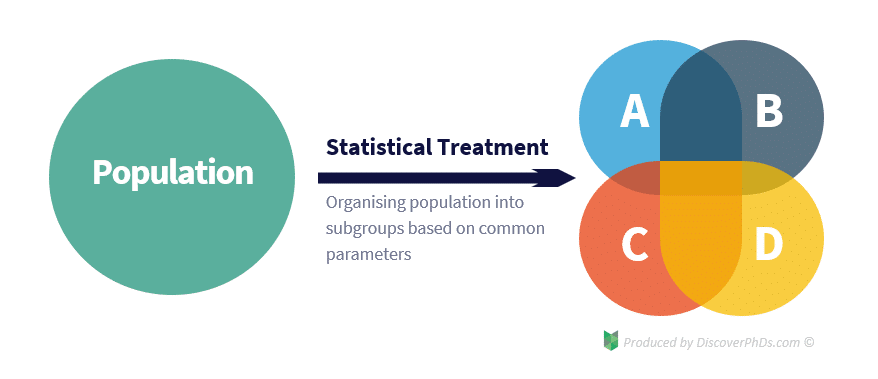

‘Statistical treatment’ is when you apply a statistical method to a data set to draw meaning from it. Statistical treatment can use either descriptive statistics, which summarise the main features of a data set (such as its average and spread), or inferential statistics, which use sample data to draw conclusions about a wider population, for example by testing a hypothesis.

Introduction to Statistical Treatment in Research

Every research student, regardless of whether they are a biologist, computer scientist or psychologist, must have a basic understanding of statistical treatment if their study is to be reliable.

This is because designing experiments and collecting data are only a small part of conducting research. The other components, which new researchers often understand less well, are the analysis, interpretation and presentation of the data. These are just as important as data collection, if not more so, because this is where meaning is extracted from the study.

What is Statistical Treatment of Data?

Statistical treatment of data is when you apply some form of statistical method to a data set to transform it from a group of meaningless numbers into meaningful output.

Statistical treatment of data involves the use of statistical methods such as:

- mean

- mode

- median

- regression

- conditional probability

- sampling

- standard deviation

- distribution range

These methods allow us to investigate the statistical relationships within the data and to identify possible errors in the study.
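The descriptive measures in the list above can be computed with Python's standard `statistics` module. The sketch below uses made-up readings and interprets "distribution range" as the difference between the largest and smallest values, which is an assumption on our part; regression, conditional probability and sampling are not covered here.

```python
import statistics

# Hypothetical measurements (illustrative values, not from any real study)
data = [4.1, 4.8, 5.0, 5.0, 5.3, 5.9, 6.2]

summary = {
    "mean": statistics.mean(data),
    "median": statistics.median(data),
    "mode": statistics.mode(data),
    # Sample standard deviation (n - 1 in the denominator)
    "stdev": statistics.stdev(data),
    # Range, assumed here to mean largest value minus smallest value
    "range": max(data) - min(data),
}

for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

Note that `statistics.stdev` gives the sample standard deviation; `statistics.pstdev` would be the choice if the data covered the entire population.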

In addition to identifying trends, statistical treatment allows us to organise and process our data in the first place. When analysing data, it is generally more useful to draw separate conclusions for each subgroup within the population than a single, more general conclusion for the whole population. To do this, we first need to classify the population into subgroups, so that the data can later be broken down along the same lines before it is analysed.

Statistical Treatment Example – Quantitative Research

As an example of statistical treatment of data, consider a medical study investigating the effect of a drug on the human population. Because the drug can affect people differently depending on parameters such as gender, age and race, the researchers would want to group the data into subgroups based on these parameters to determine how each one affects the drug's effectiveness. Categorising the data in this way is an example of basic statistical treatment.
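A minimal sketch of this kind of subgrouping in plain Python follows. The records, the age bands and the `improvement_score` values are hypothetical and chosen only for illustration; `group_means` is a helper written for this example, not part of any library.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical trial records: (age_group, sex, improvement_score).
# Field names and values are illustrative only.
records = [
    ("18-39", "F", 6.0),
    ("18-39", "M", 5.0),
    ("18-39", "F", 7.0),
    ("40-64", "M", 4.0),
    ("40-64", "F", 5.0),
    ("65+", "M", 2.0),
    ("65+", "F", 3.0),
]

def group_means(rows, key_index):
    """Group rows by the field at key_index and return the mean score per group."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key_index]].append(row[2])
    return {key: mean(scores) for key, scores in groups.items()}

by_age = group_means(records, 0)  # mean score per age group
by_sex = group_means(records, 1)  # mean score per sex
print(by_age)
print(by_sex)
```

Comparing the per-group means (here the score falls with age) is the kind of subgroup-level conclusion the paragraph describes; a real study would also need to check whether such differences are statistically significant.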

Types of Errors

A fundamental part of statistical treatment is using statistical methods to identify possible outliers and errors. No matter how careful we are, all experiments are subject to inaccuracies resulting from two types of errors: systematic errors and random errors.
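One common way to flag possible outliers is Tukey's interquartile range (IQR) rule, which marks any value more than 1.5 × IQR below the first quartile or above the third quartile. The sketch below applies it to hypothetical readings; it is one technique among several (z-scores are another), and the 1.5 multiplier is a convention rather than a fixed rule.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Return values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    # quantiles(n=4) returns the three quartile cut points Q1, Q2, Q3
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]

# Hypothetical instrument readings with one suspicious value
readings = [9.8, 10.1, 10.0, 9.9, 10.2, 10.0, 14.7, 9.7]
print(iqr_outliers(readings))  # [14.7]
```

A flagged value is only a candidate for investigation: it may be a recording error, or it may be a genuine observation that should stay in the data set.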

Systematic errors are associated with either the equipment used to collect the data or the method by which it is used; they shift measurements consistently in the same direction, as with a miscalibrated instrument. Random errors arise unpredictably in the experimental configuration, such as internal deformations within specimens or small voltage fluctuations in measuring instruments; they scatter measurements around the true value and can be reduced by averaging repeated measurements.
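The difference between the two error types can be illustrated with a small simulation. The true value, the constant offset (standing in for a systematic error) and the Gaussian noise (standing in for random error) below are all assumed values, not taken from any real instrument. Averaging more readings shrinks the random part of the error, but the mean converges on the true value plus the bias rather than on the true value itself.

```python
import random
import statistics

random.seed(0)

TRUE_VALUE = 100.0  # hypothetical true quantity being measured
BIAS = 2.0          # hypothetical systematic offset, e.g. a miscalibrated instrument
NOISE_SD = 1.5      # hypothetical standard deviation of the random error

def measure():
    """One simulated reading: true value + systematic bias + random noise."""
    return TRUE_VALUE + BIAS + random.gauss(0.0, NOISE_SD)

def mean_error(n):
    """Error of the average of n readings relative to the true value."""
    readings = [measure() for _ in range(n)]
    return statistics.mean(readings) - TRUE_VALUE

for n in (5, 50, 5000):
    print(f"n={n:5d}  error of mean={mean_error(n):+.3f}")
```

As `n` grows, the printed error settles near `BIAS`, which is why systematic errors have to be found and corrected (for example by recalibrating) rather than averaged away.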

These experimental errors can, in turn, lead to two types of error in the conclusions drawn: type I errors and type II errors. A type I error is a false positive, which occurs when a researcher rejects a null hypothesis that is true. A type II error is a false negative, which occurs when a researcher fails to reject a null hypothesis that is false.
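A type I error rate can be estimated by simulation: generate many data sets for which the null hypothesis is true, test each one, and count how often it is wrongly rejected. The sketch below assumes a two-sided one-sample z-test with known standard deviation and a significance level of 0.05; the sample size, number of trials and seed are arbitrary choices for illustration. The rejection rate should come out close to the chosen significance level.

```python
import math
import random
import statistics

def two_sided_z_test_p(sample, mu0, sigma):
    """p-value of a two-sided one-sample z-test, assuming sigma is known."""
    z = (statistics.mean(sample) - mu0) / (sigma / math.sqrt(len(sample)))
    # Standard normal CDF of |z|, computed via the error function
    phi = 0.5 * (1.0 + math.erf(abs(z) / math.sqrt(2.0)))
    return 2.0 * (1.0 - phi)

def type_one_error_rate(alpha=0.05, n=30, trials=4000, seed=1):
    """Fraction of tests that reject H0 (mean = 0) when H0 is actually true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        # Data drawn from N(0, 1), so the null hypothesis mu = 0 is true
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if two_sided_z_test_p(sample, mu0=0.0, sigma=1.0) < alpha:
            rejections += 1  # a false positive: rejecting a true null hypothesis
    return rejections / trials

print(type_one_error_rate())
```

Drawing the samples from a distribution whose mean differs from `mu0` instead would make the null hypothesis false, and the fraction of trials that fail to reject it would then estimate the type II error rate.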